Knowledge

- Identify virtual switch entries in a Virtual Machine’s configuration file

- Identify virtual switch entries in the ESX/ESXi Host configuration file

- Identify CLI commands and tools used to troubleshoot vSphere networking configurations

- Identify logs used to troubleshoot network issues

Skills and Abilities

- Utilize net-dvs to troubleshoot vNetwork Distributed Switch configurations

- Utilize vicfg-* commands to troubleshoot ESX/ESXi network configurations

- Configure a network packet analyzer in a vSphere environment

- Troubleshoot Private VLANs

- Troubleshoot Service Console and vmkernel network configuration issues

- Troubleshooting related issues

- Use esxtop/resxtop to identify network performance problems

- Use CDP and/or network hints to identify connectivity issues

- Analyze troubleshooting data to determine if the root cause for a given network problem originates in the physical infrastructure or vSphere environment

Tools & learning resources

- Product Documentation

- vSphere Client

- vSphere CLI

- vicfg-*, net-dvs, resxtop/esxtop

- Eric Sloof’s Advanced Troubleshooting presentation at the Dutch VMUG

- VMware whitepaper on Troubleshooting Performance issues

- Trainsignal’s Troubleshooting for vSphere course

- TA6862 – vDS Deep Dive – Managing and Troubleshooting (VMworld 2010)

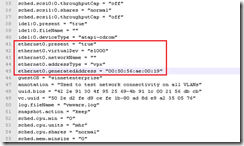

Identify virtual switch entries in a VM’s configuration file

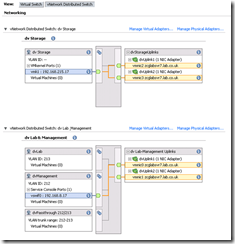

The .VMX file contains both vSS and vDS entries;

The example VM below has three vNICs on two separate vDSs. When troubleshooting you may need to correlate the values here with the net-dvs output on the host;

- NetworkName will show “” when on a vDS.

- The .VMX will show the dvPortID, dvPortGroupID and port.connectid used by the VM – all three values can be matched against the net-dvs output and used to check the port configuration details – load balancing, VLAN, packet statistics, security etc

NOTE: Entries are not grouped together in the .VMX file so check the whole file to ensure you see all relevant entries.

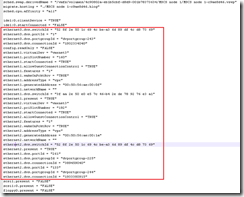
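Because the entries are scattered through the file, a quick filter helps pull them together. This is a minimal sketch rather than a definitive tool: it assumes the keys follow the ethernetN.networkName and ethernetN.dvs.* naming seen in typical vSphere 4 .VMX files, and the function name is made up for illustration.

```shell
#!/bin/sh
# List the network-related entries for every vNIC in a .vmx file.
# ASSUMPTION: keys follow the ethernetN.networkName / ethernetN.dvs.* pattern.
vmx_net_entries() {
    # $1 = path to the .vmx file
    # -i because key case can vary; the sort groups entries per vNIC.
    grep -iE '^ethernet[0-9]+\.(networkName|dvs\.)' "$1" | LC_ALL=C sort
}
```

Run it as vmx_net_entries /path/to/vm.vmx and compare the port and portgroup IDs it prints with the net-dvs output on the host.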

Identify virtual switch entries in the ESX/i host configuration file

The host configuration file (same file for both ESX and ESXi);

- /etc/vmware/esx.conf

Like the .VMX file it contains entries for both switch types although there are only minimal entries for the vDS. Most vDS configuration is held in a separate database and can be viewed using net-dvs (see section 6.3.7).
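To pick the standard vSwitch entries out of esx.conf, something like the following can help. It is a sketch under an assumption: that vSwitch and portgroup names are stored as /net/vswitch/child[NNNN]/name and /net/vswitch/child[NNNN]/portgroup/child[NNNN]/name lines, which is how they usually appear on vSphere 4 hosts. The function name is hypothetical.

```shell
#!/bin/sh
# Print the vSwitch and portgroup name entries recorded in esx.conf.
# ASSUMPTION: entries look like /net/vswitch/child[0000]/name = "vSwitch0"
esxconf_vswitch_names() {
    # $1 = path to esx.conf (normally /etc/vmware/esx.conf)
    grep -E '^/net/vswitch/child\[[0-9]+\]/(portgroup/child\[[0-9]+\]/)?name = ' "$1"
}
```

Treat the file as read-only when troubleshooting; this only reads it.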

Command line tools for network troubleshooting

The usual suspects;

- vicfg-nics

- vicfg-vmknic

- vicfg-vswitch (-b) for CDP

- vicfg-vswif

- vicfg-route

- cat /etc/resolv.conf, /etc/hosts

- net-dvs

- ping and vmkping
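A first pass with these tools is usually just a set of listings. The sketch below only prints the commands rather than running them, so you can review them first. It assumes the vSphere CLI is installed and that --server is how you point at the host; the function name is made up, and vicfg-vswif applies to ESX only.

```shell
#!/bin/sh
# Print (not run) read-only listing commands for a first-pass network check.
# ASSUMPTION: vCLI is installed and --server selects the target host.
net_check_cmds() {
    # $1 = ESX/ESXi host name or IP
    for tool in vicfg-nics vicfg-vswitch vicfg-vmknic vicfg-vswif; do
        echo "$tool --server $1 -l"
    done
}
```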

Identify logs used to troubleshoot network issues

Check section 6.1 for the typical logs used for vCenter and ESX/i hosts.

Utilize net-dvs to troubleshoot vDS switch configurations

Charming! VMware put an objective in the exam which references an unsupported command and then provides next to no documentation on how to use it. So generous!

- Located in /usr/lib/vmware/bin (not in the PATH variable so just typing net-dvs won’t work)

- Can be used to see the vDS settings saved locally on an ESX/i host;

- dvSwitch ID

- dvPort assignments to VMs

- VLAN, CDP information etc

- Trainsignal’s Troubleshooting vSphere training course covers the basics of net-dvs and how to match entries to a VM’s .vmx file. Well worth the asking price.

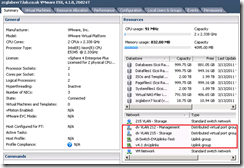
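The net-dvs output is long, so it helps to cut it down to the one dvPort you found in the .VMX file. This is a rough sketch, not a supported method: it assumes each port section in the output starts with a "port <id>:" line and that a new vDS starts with a "switch" line. The function name is hypothetical.

```shell
#!/bin/sh
# Print the settings block for one dvPort from net-dvs output on stdin.
# ASSUMPTION: each port section starts with an indented "port <id>:" line.
dvport_block() {
    # $1 = dvPort ID taken from the .vmx file
    awk -v id="$1" '
        $1 == "port" && $2 ~ /:$/ { show = ($2 == (id ":")) }  # new port section
        $1 == "switch"            { show = 0 }                 # next vDS starts
        show                                                   # print while inside the wanted port
    '
}
```

Pipe the net-dvs output into it, for example /usr/lib/vmware/bin/net-dvs | dvport_block 100.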

Host is out of sync with vCenter vDS configuration

If the proxy switches (ie the local configuration on the ESX/i host) get out of sync with the vDS config as held in the vCenter database you’ll get the following message for each host that’s misconfigured;

When connecting the VI Client directly to the host in question you’ll see it still has vDS network configuration even though vCenter shows nothing for this host. This shows that the host retains some of the vDS settings which may interfere with correct network functionality.

You can read some background and the solution to this problem on Eric Sloof’s blog or in VMwareKB1017558.

On another occasion the host failed while adding physical NICs to a dVS dvUplink PortGroup. This resulted in the host hanging and the vpxa agent failing. After rebooting the host I was presented with the following dVS configuration;

Steps to resolve;

- Restart management agents

- Reboot host

- Connect VI client directly to host

- Remove vDS config from host.

Configure a network packet analyser

There’s a lot to cover with a packet analyser but remember you can do this in two places and each uses different tools;

- The guest OS (for virtual network traffic). This can use your choice of sniffer – Wireshark is a popular and free option (check this post for a Wireshark tutorial).

- The service console or tech support mode (for management traffic, vMotion etc). You’re more limited here as you can’t install extra tools.

Packet sniffing within the virtual network

- Install your choice of packet sniffer in a guest OS (get Wireshark here)

- Enable a promiscuous port for use with the VM running the packet sniffer.

NOTE: Either enable promiscuous mode on the vSwitch or create a dedicated portgroup and only enable promiscuous mode on that portgroup (slightly more secure). Check this blogpost showing how to enable promiscuous mode.

- OPTIONAL: use a filter in Wireshark to track only certain types of traffic

- tcp.port eq 902

Packet sniffing within the management network

The tool used varies between ESX and ESXi although usage is identical;

- tcpdump (ESX). Can capture both vmKernel and Service Console traffic.

- tcpdump-uw (ESXi). Only works on vmKernel interfaces.

Tcpdump isn’t as full featured as Wireshark. It merely displays packets and lets you filter what to display using criteria such as source IP, destination IP, ports etc. It won’t do any analysis, highlighting or protocol recognition, so it’s less user friendly. You can write the capture to a file using ‘-w’ and open that in Wireshark for later analysis.

tcpdump -i vmk0 -w /home/vi-admin/netcapture.pcap tcp

NOTE: If you’re using SSH to connect to a host (rather than a direct console connection via ILO etc) and then running tcpdump you’ll see lots of traffic to port 22 as the screen updates are being sent to your screen! Exclude them using ‘port not 22’ on the end of the tcpdump syntax.
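Putting the pieces together (tool name per platform, output file, SSH exclusion), the command can be assembled as below. This is only a sketch: the function name and argument order are made up, and it prints the command for you to review rather than running it.

```shell
#!/bin/sh
# Build a capture command that writes to a file and ignores SSH traffic.
# Tool names follow the text: tcpdump on ESX, tcpdump-uw on ESXi.
capture_cmd() {
    # $1 = esx|esxi, $2 = interface (e.g. vmk0), $3 = output file
    case "$1" in
        esxi) tool=tcpdump-uw ;;
        *)    tool=tcpdump ;;
    esac
    # Options come first, the filter expression goes last.
    echo "$tool -i $2 -w $3 tcp and port not 22"
}
```

For example, capture_cmd esxi vmk0 /tmp/netcapture.pcap prints a tcpdump-uw command line you can paste into the host’s shell.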

VMwareKB1018217 shows a sample syntax used when diagnosing issues enabling HA. VMwareKB1031186 details how to capture packets on an ESXi host.

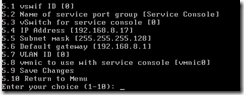

Troubleshoot vmKernel and Service Console network issues

vSphere 4.0 U2 introduced a new tool (console-setup) to make configuring the service console easier (VMware KB1022078). Prior to update 2 refer to VMwareKB1000258. For general instructions on verifying service console connectivity refer to VMwareKB1003796 (it’s the same as RHEL5 if you’re familiar with Linux).

Troubleshooting vMotion

- Check VM prerequisites

- attached CD-ROM

- persistent disks

- snapshots

- RDMs

- Not on an internal-only vSwitch (unless you’ve overridden the default for vShield)

- Check host prerequisites

- vmKernel ports

- enough memory/CPU

Network troubleshooting steps

These steps are from TA6862 – vDS Deep Dive – Managing and Troubleshooting;

- Ensure the vNIC is assigned to the correct portgroup

- Ensure the vNIC is ‘connected’

- Verify the uplinks being used by the relevant portgroup

- Is teaming correctly configured?

- Is the physical switch configured correctly for all uplinks?

- Check VLAN tagging is correct at physical switch and portgroup

- Check MTU matches between vNIC and vSwitch (or portgroup)

- Check vmknic MTU using esxcfg-vmknic -l (the -m option sets the MTU rather than displaying it)

- Run ping -s <size> <destination IP> (vmkping -s for vmKernel interfaces)

Here’s a good blogpost about MTU – check the comments

- Check for dropped packets (either in esxtop or vCenter performance charts, and additionally on the Ports tab for a vDS)

- Use a packet sniffer to check traffic sent from the source is received at the destination. If not there may be issues in the physical network infrastructure

NOTE: VMwareKB1003969 covers some good general troubleshooting steps.
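For the MTU check, the size passed to ping -s is the payload, not the MTU itself. A small worked example, assuming IPv4 with no IP options (20-byte IP header plus 8-byte ICMP header); the function name is just for illustration:

```shell
#!/bin/sh
# Largest ping payload that fits in one frame for a given MTU.
# 28 bytes = 20-byte IPv4 header + 8-byte ICMP header.
ping_payload() {
    # $1 = MTU in bytes
    echo $(( $1 - 28 ))
}

ping_payload 1500   # prints 1472
ping_payload 9000   # prints 8972
```

So to test a jumbo frame path end to end you would ping with a size of 8972. If that fails while smaller sizes work, something along the path has a smaller MTU.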

Performance checks

- Check jumbo frames are enabled (especially if CPU usage is high)

- Verify that VMware Tools is installed in all VMs

- Locate VMs with high traffic requirements on the same host so traffic goes across the local bus rather than the network

- Enable TSO in the guest OS

- Where possible use VMXNET3 drivers which optimise performance

NOTE: When configuring NIC teaming on an ESXi server and using ‘route based on IP hash’ with an Etherchannel link you can experience intermittent network access – see VMwareKB1022751 for an explanation and solution.

Using resxtop/esxtop to diagnose network issues

You can use esxtop to check that NIC teaming is working as expected. Press n for the network view, then check the traffic per vmnic and whether any packets are being dropped (the %DRPTX and %DRPRX columns).
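If you capture esxtop in batch mode (for example esxtop -b -n 1 > sample.csv) you can scan the output for drops instead of watching the screen. A minimal sketch, assuming the CSV has quoted headers on line 1 and one quoted sample on line 2, and that the dropped-packet columns have "Packets Dropped" in their header; the function name is hypothetical.

```shell
#!/bin/sh
# Report network ports with a non-zero dropped-packet percentage.
# ASSUMPTION: input is esxtop batch CSV; relevant headers contain
# the text "Packets Dropped".
dropped_ports() {
    # $1 = CSV file: line 1 holds headers, line 2 the sampled values
    awk -F '","' '
        { gsub(/^"|"$/, "") }                       # trim the outer quotes
        NR == 1 { for (i = 1; i <= NF; i++) name[i] = $i; next }
        NR == 2 { for (i = 1; i <= NF; i++)
                      if ((name[i] ~ /Packets Dropped/) && ($i + 0 > 0))
                          print name[i] " = " $i }
    ' "$1"
}
```

No output means no drops were recorded in that sample. Take several samples before drawing conclusions.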

Using CDP and network hints to troubleshoot

- Enabled by default (in listen mode) on vDS

- Disabled by default on vSS

- CLI configuration (vSS only)

- vicfg-vswitch -b <vSwitch> Show CDP status for a given vSwitch

- vicfg-vswitch -B both <vSwitch> Enable CDP for a given vSwitch

- GUI configuration (vDS only)

- Set on the vDS properties using the VI client

